U of T’s Citizen Lab, international human rights program explore dangers of using AI in Canada’s immigration system

Published: September 26, 2018

Canada is fast becoming a leader in artificial intelligence, with innovators across the country finding new ways of using automation for everything from cancer detection to self-driving cars.

According to a joint report by the international human rights program (IHRP) in the Faculty of Law and the Citizen Lab, based in the Munk School of Global Affairs & Public Policy, the Canadian government is beginning to embrace automation too. But used irresponsibly, automation can trample on human rights.

The report looks at the ways the Canadian government is considering using automated decision-making in the immigration and refugee system, and the dangers of using AI as a solution for rooting out inefficiencies.

“The idea with this project is to get ahead of some of these issues and present ideas and policy recommendations and best practices in terms of, if you're going to be using these technologies, how they need to accord to basic human rights principles so they do good and not harm,” says Petra Molnar, one of the authors of the report and a technology and human rights researcher at IHRP.

Molnar, along with co-author Lex Gill, who was a Citizen Lab research fellow at the time, found that the Canadian government is already developing automated systems to screen immigrant and visitor applications, particularly those that are considered high risk or fraudulent.

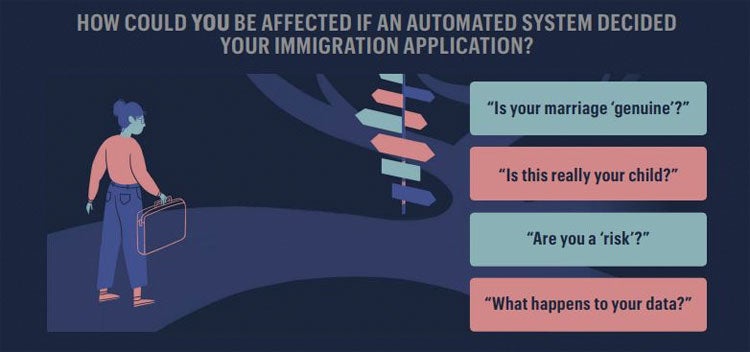

“But a lot of this is being talked about without definitions,” says Molnar. “So what does high risk mean? We can all imagine which groups of travellers would be caught up under that. Or fraudulent – how are they going to determine whether a marriage is fraudulent or not or if this child is really your child? There are no parameters.”

The report includes a taxonomy of immigration decisions. “We take the reader through what it would look like if you are applying to enter Canada – all the different considerations you have to think about,” says Molnar. “Each section is broken down by applications and questions of how these technologies might actually be impinging on human rights.” (Illustration by Jenny Kim/Ryookyung Kim Design)

Concrete information about government practices has proven hard to find. Molnar says the research team filed 27 access-to-information requests but was still awaiting responses when the report was written.

The problem at the core of automation, says Molnar, is that algorithms are not truly neutral.

“They take on the biases and characteristics of the person who inputs the data and where the algorithm learns from,” she says. “The worry is it's going to replicate the biases and discriminatory ways of thinking the system is already rife with.”

The authors also looked at case studies from around the world of governments using AI for immigration-related decision-making.

“No one has done a human rights analysis of these technologies, which to me is kind of bonkers,” says Molnar. “How are these technologies actually going to impact people’s daily reality? That's where we come in.”

The report highlights international cases of algorithms failing to protect the rights of people affected by immigration decisions. These include U.S. Immigration and Customs Enforcement (ICE) setting an algorithm to justify detaining 100 per cent of migrants at the border, and the U.K. government wrongfully deporting more than 7,000 students who it claimed had cheated on English-language equivalency tests administered using voice recognition software. In many of those cases, the automated voice analysis was shown to be incorrect when compared with human analysis.

“There are all these ways the algorithmic decision-making tool can be just as faulty but we view them with perfection so without realizing, we risk deploying them irresponsibly and ending up where possibly we were better off with human decision-makers,” says Cynthia Khoo, Google policy fellow at Citizen Lab and one of the reviewers of the report.

(Illustration by Jenny Kim/ Ryookyung Kim Design)

The report offers a list of recommendations the authors hope will be adopted by the Canadian government, including the establishment of an oversight body to monitor algorithmic decision-making and informing the public about what AI technology will be used.

The research team hopes to update the report once the access-to-information documents are received and to continue its work on automation by looking at other uses of AI by the federal government, including in the criminal justice system, says Molnar.

For both IHRP and Citizen Lab, the nature of this report is unusual – focusing on potential harm and not existing violations of human rights, says Samer Muscati, director of IHRP.

“This gives us great opportunities to have impact right from the start before these systems are finalized,” he says. “Once they're in place, it's much harder to change a system than when it's actually designed.”

Ahead of the report’s publication, IHRP and Citizen Lab met with government officials in Ottawa to present its findings.

Muscati hopes that, beyond these meetings, the report can make a real difference.

“It's when we see practices and policies being changed – that's when we know we're having some impact.”