U of T researchers develop video camera that captures 'huge range of timescales'

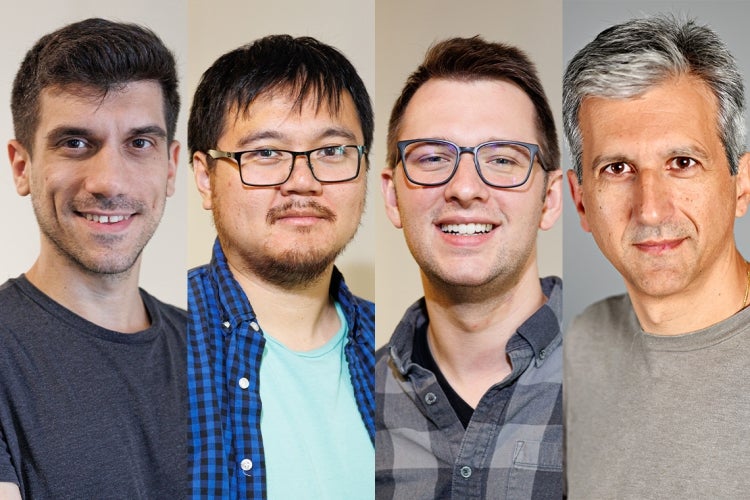

Researchers Sotiris Nousias and Mian Wei work on an experimental setup that uses a specialized camera and an imaging technique that timestamps individual particles of light to replay video across large timescales (photo by Matt Hintsa)

Published: November 13, 2023

Computational imaging researchers at the University of Toronto have built a camera that can capture everything from light bouncing off a mirror to a ball bouncing on a basketball court – all in a single take.

Dubbed a “microscope for time” by one researcher, the imaging technique could lead to improvements in everything from medical imaging to the LIDAR (Light Detection and Ranging) technologies used in mobile phones and self-driving cars.

“Our work introduces a unique camera capable of capturing videos that can be replayed at speeds ranging from the standard 30 frames per second to hundreds of billions of frames per second,” says Sotiris Nousias, a post-doctoral researcher who is working with Kyros Kutulakos, a professor of computer science in the Faculty of Arts & Science.

“With this technology, you no longer need to predetermine the speed at which you want to capture the world.”

The research by members of the Toronto Computational Imaging Group – including computer science PhD student Mian Wei, electrical and computer engineering alumnus Rahul Gulve and David Lindell, an assistant professor of computer science – was recently presented at the 2023 International Conference on Computer Vision, where it received one of two best paper awards.

“Our camera is fast enough to even let us see light moving through a scene,” Wei says. “This type of slow and fast imaging where we can capture video across such a huge range of timescales has never been done before.”

Wei compares the approach to combining the various video modes on a smartphone: slow motion, normal video and time lapse.

“In our case, our camera has just one recording mode that records all timescales simultaneously and then, afterwards, we can decide [what we want to view],” he says. “We can see every single timescale because if something’s moving too fast, we can zoom in to that timescale, if something’s moving too slow, we can zoom out and see that, too.”

Professor Kyros Kutulakos (photos supplied)

While conventional high-speed cameras can record video up to around one million frames per second without a dedicated light source – fast enough to capture videos of a speeding bullet – they are too slow to capture the movement of light.

The researchers say capturing an image much faster than a speeding bullet without a synchronized light source such as a strobe light or a laser creates a challenge because very little light is collected during such a short exposure period – and a significant amount of light is needed to form an image.
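
As a rough illustration of that light budget (the photon rate below is a hypothetical round number, not a figure from the researchers): if a single pixel receives photons at an average rate $\Phi$, the expected number collected during an exposure of length $\Delta t$ is

$$
N = \Phi\,\Delta t, \qquad \Phi = 10^{6}\ \text{photons/s (assumed)}
$$

$$
\Delta t = \tfrac{1}{30}\ \text{s} \;\Rightarrow\; N \approx 33{,}000, \qquad \Delta t = 10^{-6}\ \text{s} \;\Rightarrow\; N = 1
$$

Under that assumption, a pixel that gathers tens of thousands of photons per frame at 30 frames per second would average just one photon per frame at one million frames per second.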

To overcome these issues, the research team used a special type of ultra-sensitive sensor called a free-running single-photon avalanche diode (SPAD). The sensor operates by time-stamping the arrival of individual photons (particles of light) with precision down to trillionths of a second. To recover a video, they use a computational algorithm that analyzes when the photons arrive and estimates how much light is incident on the sensor for any given instant in time, regardless of whether that light came from room lights, sunlight or from lasers operating nearby.

Reconstructing and playing back a video is a matter of retrieving the light levels corresponding to each video frame.
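
The idea of choosing a timescale after capture can be sketched with plain histogram binning. This is only an illustration, not the researchers' algorithm, which the article does not describe: the timestamps are simulated from a constant-rate source, and the function names, photon rate and frame rates are all hypothetical.

```python
import random

def simulate_timestamps(rate_hz, duration_s, seed=0):
    """Simulate photon arrival times (seconds) as a constant-rate Poisson process.

    Stand-in for real sensor data; the rate is an assumed value.
    """
    rng = random.Random(seed)
    t, stamps = 0.0, []
    while True:
        t += rng.expovariate(rate_hz)  # exponential gap between arrivals
        if t >= duration_s:
            return stamps
        stamps.append(t)

def frames_from_timestamps(stamps, duration_s, fps):
    """Bin timestamps into frames and return estimated light level (photons/s) per frame."""
    n_frames = int(round(duration_s * fps))
    frame_dt = duration_s / n_frames
    counts = [0] * n_frames
    for t in stamps:
        idx = min(int(t / frame_dt), n_frames - 1)  # guard against rounding at the end
        counts[idx] += 1
    return [c / frame_dt for c in counts]

# One recording, two playback rates chosen afterwards.
stamps = simulate_timestamps(rate_hz=50_000, duration_s=0.1)
slow = frames_from_timestamps(stamps, 0.1, fps=100)     # 10 frames
fast = frames_from_timestamps(stamps, 0.1, fps=10_000)  # 1,000 frames
```

Both frame sequences come from the same list of timestamps; only the bin width changes. The faster sequence has far fewer photons per frame, so a raw count becomes noisy, which is why a more careful estimate of the light level is needed at extreme frame rates.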

The researchers refer to the novel approach as “passive ultra-wideband imaging,” which enables post-capture refocusing in time – from transient to everyday timescales.

“You don’t need to know what happens in the scene, or what light sources are there. You can record information and you can refocus on whatever phenomena or whatever timescale you want,” Nousias explains.

Using an experimental setup that employed multiple external light sources and a spinning fan, the team demonstrated their method’s ability to allow for post-capture timescale selection. In their demonstration, they used photon timestamp data captured by a free-running SPAD camera to play back video of a rapidly spinning fan at both 1,000 frames per second and 250 billion frames per second.
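
For a sense of scale, the frame durations implied by those two playback rates can be worked out directly. The only outside value used here is the speed of light in vacuum, $c \approx 3.0 \times 10^{8}$ m/s.

$$
\Delta t_{\text{slow}} = \frac{1}{10^{3}\ \text{s}^{-1}} = 1\ \text{ms}, \qquad \Delta t_{\text{fast}} = \frac{1}{2.5 \times 10^{11}\ \text{s}^{-1}} = 4\ \text{ps}
$$

$$
c\,\Delta t_{\text{fast}} \approx (3.0 \times 10^{8}\ \text{m/s})(4 \times 10^{-12}\ \text{s}) = 1.2\ \text{mm}
$$

At the faster rate, light advances roughly 1.2 mm between consecutive frames, and the two playback rates differ by a factor of $2.5 \times 10^{8}$.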

The technology could have myriad applications.

“In biomedical imaging, you might want to be able to image across a huge range of timescales at which biological phenomena occur. For example, protein folding and binding happen across timescales from nanoseconds to milliseconds,” says Lindell. “In other applications, like mechanical inspection, maybe you’d like to image an engine or a turbine for many minutes or hours and then after collecting the data, zoom in to a timescale where an unexpected anomaly or failure occurs.”

In the case of self-driving cars, each vehicle may use an active imaging system like LIDAR to emit light pulses that can interfere with other systems on the road. However, the researchers say their technology could “turn this problem on its head” by capturing and using ambient photons. For example, they say it might be possible to create universal light sources that any car, robot or smartphone can use without requiring the explicit synchronization that is needed by today’s LIDAR systems.

Astronomy is another area that could see potential imaging advancements – including when it comes to studying phenomena such as fast radio bursts.

“Currently, there is a strong focus on pinpointing the optical counterparts of these fast radio bursts more precisely in their host galaxies. This is where the techniques developed by this group, particularly their innovative use of SPAD cameras, can be valuable,” says Suresh Sivanandam, interim director of the Dunlap Institute for Astronomy & Astrophysics and associate professor at the David A. Dunlap department of astronomy and astrophysics.

The researchers say that while sensors capable of timestamping photons already exist – it’s an emerging technology that’s been deployed in iPhones’ LIDAR and proximity sensors – no one has used the photon timestamps in this way to enable this type of ultra-wideband, single-photon imaging.

“What we provide is a microscope for time,” Kutulakos says. “So, with the camera you record everything that happened and then you can go in and observe the world at imperceptibly fast timescales.

“Such capability can open up a new understanding of nature and the world around us.”